Date: Fri Jun 22 2018

Tags: Amazon »»»» Amazon Web Services »»»» Big Brother »»»» Face Recognition »»»» Machine Learning

Among the Amazon Web Services offerings is Rekognition, a facial recognition system running on Amazon's cloud. Anyone can sign up for the service to have video analyzed to identify people or objects. It turns out the federal government is using this service for various tasks, including deportation and detention programs run by ICE, the Immigration and Customs Enforcement agency. A group of Amazon employees has written to Amazon CEO Jeff Bezos demanding that Amazon not do as IBM did during the 1940s, when IBM's systems were used by Nazi Germany to help round up Jews.

The letter (below) cites a number of troubling recent developments, from the militarization of the police to the recent Trump Administration policy of separating children from their parents in cases involving undocumented immigrants.

The employees note that their work is being used by the federal government, possibly for human rights violations. Palantir has a contract with ICE (Immigration and Customs Enforcement) to power its deportation and detention programs, and Palantir in turn has a contract with AWS to run its computing infrastructure.

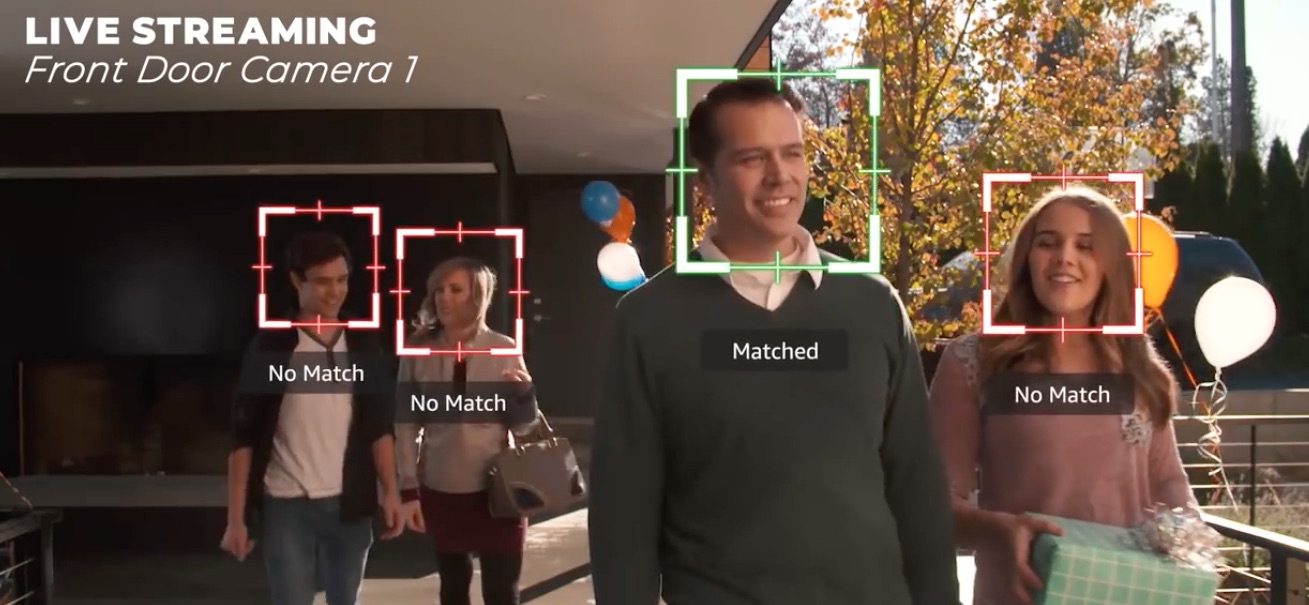

According to Amazon's advertising video for its Rekognition service (see below), the service provides rich metadata and object detection in live or recorded video streams. The metadata can be used in "search and filtering". The service is powerful: for example, it can recognize people whose faces are partly hidden, or track when a person leaves and then returns to the video's field of view.

Many of the scenarios in the video are benign, for example a front door camera system that identifies people approaching your house, or identifying people and objects in a video that might be posted to social media. But at the 1:40 mark the video talks about using the service for law enforcement, to "track persons of interest" from a collection of tens of millions of faces.

Curiously, as the narrator says "unsafe content detection", the video shows the face of a black man. Why? That man is David Ortiz, who appears to be a baseball player with the Red Sox.

In May, MSNBC aired a video report discussing the obvious Big Brother implications of several police agencies using Rekognition in law enforcement.

In November, Amazon published a video describing Butterfleye, which has used AWS Rekognition to develop a security camera system for businesses.

That video demonstrates a problem with having AWS deny Palantir the use of the Rekognition service: any company can develop a similar service. Video recognition software is available as open source, and Palantir, for example, surely has staff capable of building their own instance of such software.

A company using the AWS Rekognition service can save a lot of money, and therefore get to market faster, because Amazon has already set up the service.

In May, the ACLU released a report detailing how Amazon is collaborating with the federal government:

Amazon Teams Up With Government to Deploy Dangerous New Facial Recognition Technology

Dear Jeff,

We are troubled by the recent report from the ACLU exposing our company’s practice of selling AWS Rekognition, a powerful facial recognition technology, to police departments and government agencies. We don’t have to wait to find out how these technologies will be used. We already know that in the midst of historic militarization of police, renewed targeting of Black activists, and the growth of a federal deportation force currently engaged in human rights abuses — this will be another powerful tool for the surveillance state, and ultimately serve to harm the most marginalized. We are not alone in this view: over 40 civil rights organizations signed an open letter in opposition to the governmental use of facial recognition, while over 150,000 individuals signed another petition delivered by the ACLU.

We also know that Palantir runs on AWS. And we know that ICE relies on Palantir to power its detention and deportation programs. Along with much of the world we watched in horror recently as U.S. authorities tore children away from their parents. Since April 19, 2018 the Department of Homeland Security has sent nearly 2,000 children to mass detention centers. This treatment goes against U.N. Refugee Agency guidelines that say children have the right to remain united with their parents, and that asylum-seekers have a legal right to claim asylum. In the face of this immoral U.S. policy, and the U.S.’s increasingly inhumane treatment of refugees and immigrants beyond this specific policy, we are deeply concerned that Amazon is implicated, providing infrastructure and services that enable ICE and DHS.

Technology like ours is playing an increasingly critical role across many sectors of society. What is clear to us is that our development and sales practices have yet to acknowledge the obligation that comes with this. Focusing solely on shareholder value is a race to the bottom, and one that we will not participate in.

We refuse to build the platform that powers ICE, and we refuse to contribute to tools that violate human rights.

As ethically concerned Amazonians, we demand a choice in what we build, and a say in how it is used. We learn from history, and we understand how IBM’s systems were employed in the 1940s to help Hitler. IBM did not take responsibility then, and by the time their role was understood, it was too late. We will not let that happen again. The time to act is now.

We call on you to:

- Stop selling facial recognition services to law enforcement

- Stop providing infrastructure to Palantir and any other Amazon partners who enable ICE.

- Implement strong transparency and accountability measures, that include enumerating which law enforcement agencies and companies supporting law enforcement agencies are using Amazon services, and how.

Our company should not be in the surveillance business; we should not be in the policing business; we should not be in the business of supporting those who monitor and oppress marginalized populations.

Sincerely,

Amazonians